Brains to Brawn

While the hyperscalers (Amazon – AMZN, Alphabet – GOOGL, Meta – META, and Microsoft – MSFT) and NVIDIA (NVDA) continue to command headlines and trillions of dollars in market value, the real stock market action has shifted toward smaller companies solving critical data center bottlenecks. The first AI bottleneck was NVIDIA chips, the brains of AI. But AI's spectacular growth has ballooned the physical scale of data centers and created infrastructure constraints. The brawn, such as power management, cooling, and network speed, is now the bottleneck to AI development and has become the key focus for both industry and investors.

The AI arms race is shifting from merely buying chips to building the physical capacity to run them. The massive infrastructure spending by hyperscalers, often seen as maintenance needed to keep up with ever-faster AI hardware, is now running into physical limits. As a result, the companies addressing these bottlenecks are the new AI darlings of Wall Street.

Key Data Center Bottlenecks:

We asked Google AI to describe some of the key bottlenecks, and this was its response:

Networking and Interconnects

In modern AI, the network is the computer. Unlike traditional cloud apps that talk to the internet, AI training requires thousands of GPUs to talk to each other simultaneously to share data.

- The “East-West” Traffic Jam: AI generates massive amounts of internal (east-west) traffic between nodes. If one cable or switch lags, the entire multi-billion-dollar GPU cluster can sit idle waiting for it, a problem known as “tail latency”.

- The Copper vs. Fiber Trade-off: Traditional copper cables are cheap and power-efficient but can only reach about 2 meters at these speeds. To build larger clusters, engineers must switch to optical fiber links, whose transceivers are expensive, power-hungry, and more prone to failure.

- The 800G Standard: To keep up, data centers are rapidly upgrading to 800G and 1.6T network speeds, which require entirely new classes of transceivers and switches.

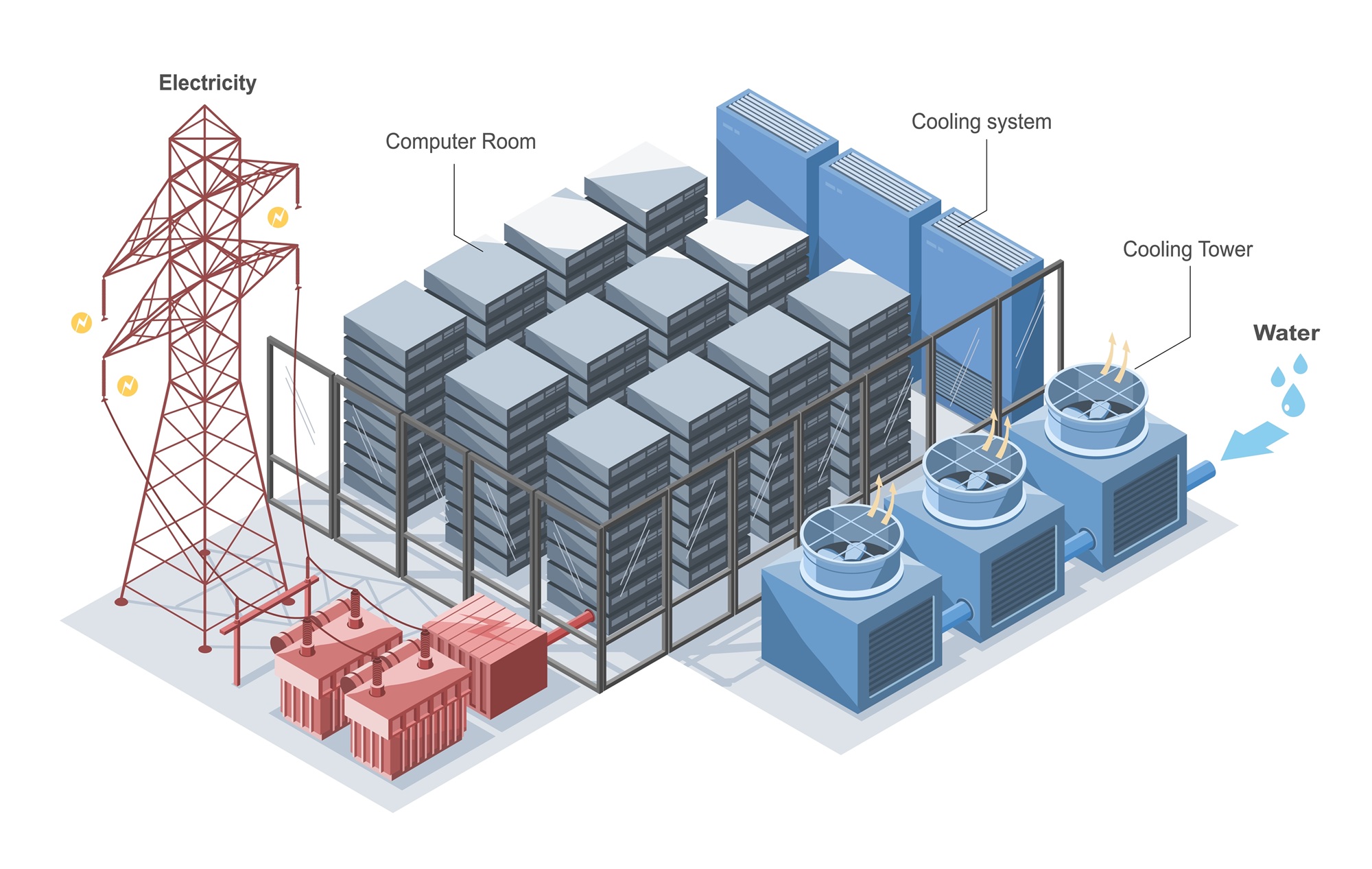

Power and Cooling

AI chips don’t just compute; they convert massive amounts of electricity into heat. This creates a “thermal wall” that legacy facilities cannot handle.

- Grid Gridlock: Many AI projects are delayed for years because the local electrical grid simply cannot supply the 100+ megawatts required by a single mega-site. Operators are now scouting locations based on power availability rather than fiber proximity.

- Rack Density Explosion: Traditional server racks draw 5–10 kW of power. Modern AI racks (such as those built around NVIDIA’s Blackwell GPUs) can demand over 100 kW per rack, with some designs pushing toward 400 kW.

- The Death of Air Cooling: At these densities, blowing cold air through a room is no longer enough. Companies are forced to move to direct-to-chip liquid cooling or immersion cooling (submerging servers in fluid), which requires a total redesign of datacenter plumbing and architecture.

Component Supply Chains

Even if you have the GPUs and the power, you still need thousands of “boring” but essential parts that are currently in short supply.

- The “Electrical Gear” Shortage: Many of the biggest delays are caused not by chips but by shortages of transformers, switchgear, and power distribution units (PDUs). These heavy industrial components often have lead times exceeding a year.

- Material Overlap: The AI boom is competing with the green energy transition for materials like copper (used in cables and power grids) and high-purity silicon. Experts predict a 30% gap in copper supply by 2035.

- Specialized Components: Items like High Bandwidth Memory (HBM) and advanced optical transceivers have highly concentrated supply chains, making the entire industry vulnerable to single-point failures.

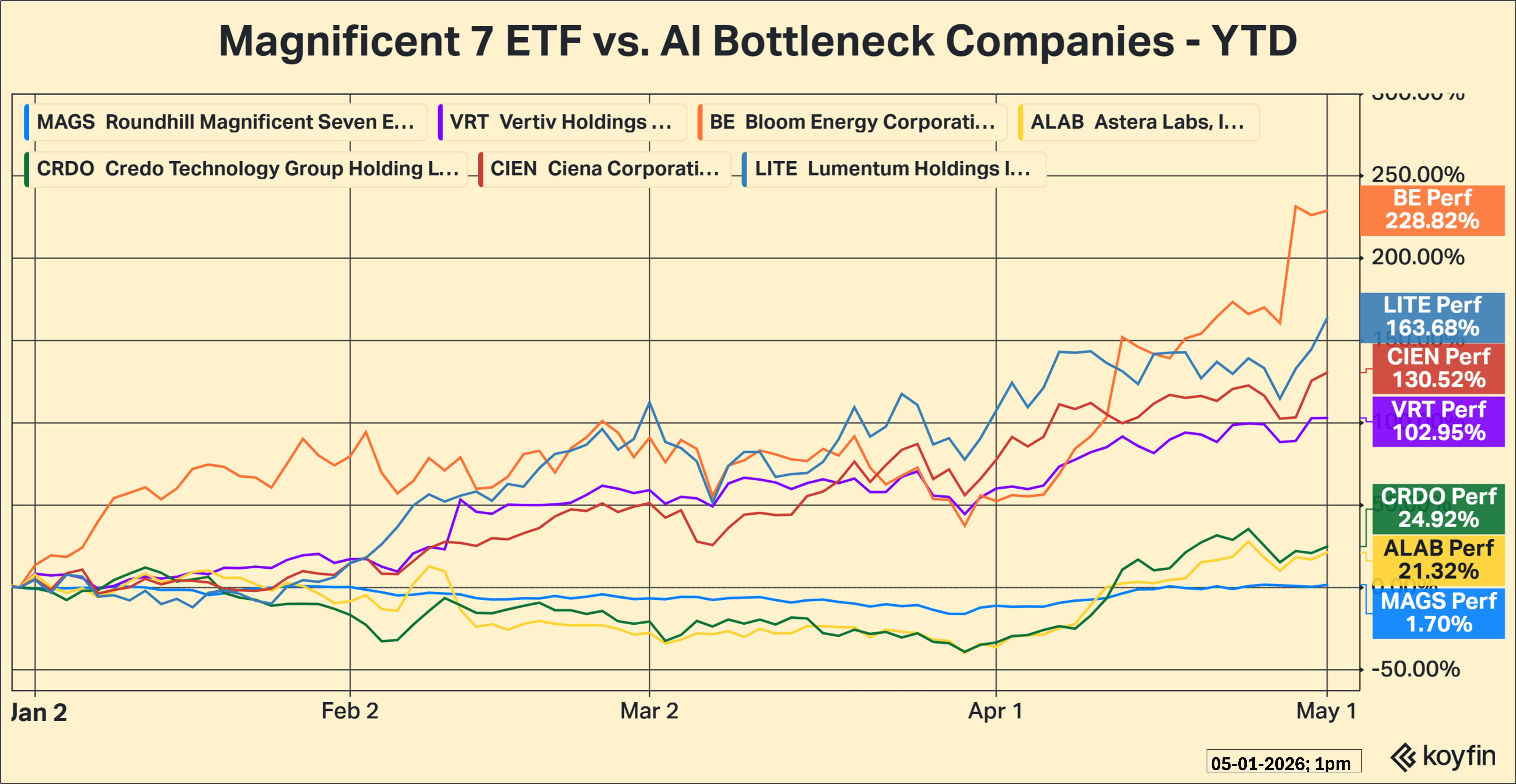

It has not taken long for the stock market to recognize this shift, as the chart below makes clear. It compares the stock market performance of the Magnificent 7 ETF (MAGS) with a handful of key ‘bottleneck stocks’: Vertiv (VRT – power infrastructure), Bloom Energy (BE – power generation), Astera Labs (ALAB – networking & memory), Credo (CRDO – high-speed connectivity), Lumentum (LITE – optical and photonics products), and Ciena (CIEN – optical networking).

The race among countries and companies to dominate artificial intelligence has created a growth curve unlike anything we have experienced before. Advances that would normally take decades are being compressed into years, and that pace is straining the physical and technical capacity to meet demand. Many of the technical constraints can keep pace because solving them mainly requires engineering skill. Physical constraints, like transformer availability, are different: they require new manufacturing facilities and new sources of raw materials, which can take a very long time to develop. Although the headlines continue to be filled with news about the AI hyperscalers, the chart makes it clear that the next wave of investment is already moving toward the companies solving AI's physical bottlenecks.

Have a great week!

What We’re Reading

- 60 Minutes Extended interview: Ben Sasse on lessons for America (40 min video – highly recommended)

- Cramer says the market powered through a tough earnings week but ‘that doesn’t mean we’re out of the woods yet’

- Fed dissenters explain ‘no’ votes, saying they disagreed with hinting next move would be a cut

- Core inflation rate hit 3.2% in March as first-quarter growth disappointed at 2%

Palumbo Wealth Management (PWM) is a registered investment advisor. Advisory services are only offered to clients or prospective clients where PWM and its representatives are properly licensed or exempt from licensure. For additional information, please visit our website at www.palumbowm.com.

The information provided is for educational and informational purposes only and does not constitute investment advice and it should not be relied on as such. It should not be considered a solicitation to buy or an offer to sell a security. It does not take into account any investor’s particular investment objectives, strategies, tax status, or investment horizon. You should consult your attorney or tax advisor.

The views expressed in this commentary are subject to change based on market and other conditions. These documents may contain certain statements that may be deemed forward‐looking statements. Please note that any such statements are not guarantees of any future performance and actual results or developments may differ materially from those projected. Any projections, market outlooks, or estimates are based upon certain assumptions and should not be construed as indicative of actual events that will occur.

All information has been obtained from sources believed to be dependable, but its accuracy is not guaranteed. There is no representation or warranty as to the current accuracy, reliability, or completeness of, nor liability for, decisions based on such information and it should not be relied on as such.

Past performance is no guarantee of future returns.

AI, AI bottlenecks, AI Interconnects, AI power and cooling, AI Winners

By: Adam